What comes after GPU power | Reasons why CPU demand will increase in the AI agent era [Zettai Noia]

Release: 2026/05/07 20:37

Original author: あ みつけたわよ。旧・成れの果て戦

Original source: https://www.youtube.com/embed/HympcQeB38g

#remotion #codex #ai

This is a rough explanation, so please take it with a grain of salt. The audio was created with irodoriTTS, trained with sbv2.7 and used with aivisspeech. The video was created with Remotion using codex. The character image was made with the PixAIsunflower model and GPTImage2.0. "KV cache" is an abbreviation for Key-Value Cache. I misread it.

Reference paper: https://arxiv.org/abs/2511.00739
Article: https://note.com/atom_/n/n1a3ae798251c

Why CPU demand will increase in the age of AI agents

When people look at the development of generative AI, many think of GPUs first. GPUs train huge AI models, generate images and video, and process large volumes of matrix operations at high speed. They are the semiconductors at the heart of modern AI and a symbol of AI infrastructure investment.

However, when thinking about future AI infrastructure, it is no longer as simple as "GPUs are powerful, so we only need to look at GPUs." In fact, the more powerful the GPU, the more important the CPU, memory, network, storage, and scheduler around it become. No matter how high-performance a GPU is, if the mechanism that hands work to it is slow, the GPU has to wait. The key to success in AI infrastructure is shifting from the performance of a single chip to an overall design that keeps huge computational resources running efficiently.

The essence of the GPU is massively parallel computation. It is very strong in workloads such as Transformers, attention, image generation, video generation, and simulation, where a large number of identical calculations are processed at once. GPUs deliver overwhelming performance in matrix calculations, which are the core of AI models. GPUs will therefore continue to play a central role in AI training and large-scale inference. This is not the end of GPU demand.
In fact, the more important AI becomes, the more important GPUs will remain. However, an AI service as a whole is not made up of matrix operations alone. Real AI services involve a large amount of fine-grained control processing: API reception, user authentication, request distribution, tokenization, queue management, batching, logging, billing, security, and error handling. These are not the huge parallel calculations that GPUs are good at, but the branching and control work that CPUs are good at.

In the age of AI agents, this CPU-side processing will become even more demanding. AI agents do more than answer questions. They run searches, open browsers, query databases, run Python, read files, call external APIs, and retry when something fails. This is closer to real-world clerical work than to computation inside a model. In other words, the smarter AI agents become, the more control, connectivity, decision-making, and re-execution takes place outside the model.

In this setting, the CPU is not just a supporting player. It is the command center that hands the next piece of work to the model, checks the results that come back, calls other tools if necessary, and keeps the whole process moving. If the GPU is a gigantic furnace, the CPU is the manager who transports materials, decides the order of work, manages the process, and responds on site when something goes wrong. The higher the performance of the GPU, the more important the CPU's ability to keep the GPU from sitting idle.

This structure becomes clear when you look inside LLM inference. Inference consists of prefill, which processes the input text all at once, and decode, which generates the continuation token by token. Prefill is relatively easy to parallelize and is a process that GPUs are good at. Decode, on the other hand, is sequential and needs the previous result to produce the next token, so the GPU cannot always run at maximum efficiency.
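
The difference between prefill and decode can be sketched with a toy generator. This is only an illustration under stated assumptions: `toy_next_token` is a hypothetical stand-in for a model forward pass, not a real LLM, and `generate` is a name chosen for this example. What it shows is the data dependency: the prompt is fully known up front, while every decode step needs the token produced by the step before it.

```python
from typing import Callable, List


def toy_next_token(context: List[int]) -> int:
    """Hypothetical stand-in for a model forward pass (not a real LLM)."""
    return (sum(context) + len(context)) % 50


def generate(
    prompt: List[int],
    max_new_tokens: int,
    next_token: Callable[[List[int]], int] = toy_next_token,
) -> List[int]:
    # Prefill: the whole prompt is available at once, so a real engine
    # can process all prompt positions in a single parallel pass.
    context = list(prompt)

    # Decode: each step consumes the token produced by the previous step,
    # so the steps of one request cannot run in parallel with each other.
    output: List[int] = []
    for _ in range(max_new_tokens):
        token = next_token(context)
        output.append(token)
        context.append(token)
    return output


if __name__ == "__main__":
    print(generate([1, 2, 3], max_new_tokens=3))
```

Because each loop iteration depends on the previous one, a serving system typically has to batch many requests together to keep the accelerator busy during decode.
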
This is where the performance of the inference platform comes to depend heavily on how requests are mixed, when they are batched, and how memory is used.

Even more important is the KV cache. The KV cache is a huge working memory that holds context during generation. It grows as contexts get longer, as more users are served simultaneously, as multiple candidates are generated, and as agents run more internal loops. Allocating, freeing, reusing, and hierarchically managing this memory as needed is largely operating-system-style work. Here again, the overall design, including CPU, DRAM, HBM, CXL, SSD, and NIC, is put to the test.

In other words, AI inference is evolving from a world where only GPU computation matters to a layered system in which CPUs, GPUs, memory, networks, and storage work together. If you look only at the GPU, you will overlook the clogged pipes. No matter how powerful a GPU cluster is, overall performance will not improve if CPU-side scheduling is weak, memory bandwidth is insufficient, the network is congested, or storage is slow. AI infrastructure needs to be viewed as a whole system, not as a single chip.

The essence of the CPU is branching and control: interrupts, exceptions, privileged modes, virtual memory, context switches, and I/O. The CPU is a processor that manages a world in which you do not know what is coming next. It is less efficient than a GPU at processing large amounts of identical calculations at once, but it is strong in irregular processing, external connections, fine-grained decisions, and failure handling. This flexibility is very important in systems like AI agents, where the situation changes every time.

The TPU, by contrast, is a dedicated ASIC for tensor calculations. It is strong in large, regular matrix operations, high-volume cloud inference, and computational graphs that a compiler can fix ahead of time.
In an environment like Google's, where models, compilers, cloud, and hardware can be vertically integrated, TPUs become very efficient dedicated factories. However, the TPU is not as flexible as a GPU in settings with many dynamic shapes, fine-grained branches, and custom calculations. It is strong in standardized processing but has limits in research, development, and field work, where things change quickly.

The LPU is a specialized engine for language inference, especially low-latency token generation. In chat where a human is waiting, voice AI, short re-inference, and fast thought loops inside an agent, response speed is highly valuable. AI that responds quickly makes the user experience feel natural. However, LPUs are unlikely to play a central role in image generation, video generation, 3D, robotics, or large-scale training. The LPU is strong in low-latency language inference, but it is not positioned as an all-purpose AI factory.

Organized this way, the CPU handles control, the GPU handles flexible massive parallelism, the TPU handles fixed tensor calculations, and the LPU handles low-latency language inference. What matters is not which one is the greatest. The question is which job should be entrusted to which semiconductor. AI infrastructure is moving toward a division of labor by use case rather than a single winner that dominates everything.

In the AI agent era, the CPU will be responsible for action management, RAG, databases, APIs, security, logs, billing, and retries. The GPU is responsible for large-scale inference and generation, the TPU efficiently processes large volumes of regular inference, and the LPU speeds up short thought loops and conversational responses. If the CPU is weak here, the agent will stall every time it calls a tool. Even if the GPU is strong, if search waits, API waits, and DB waits pile up, both humans and the GPU will be left waiting.
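
The point about tool calls and waiting can be made concrete with a small sketch. Everything here is an assumption for illustration: `StepCost`, `run_with_retry`, `make_flaky_tool`, and `accelerator_busy_fraction` are hypothetical names, the millisecond figures are made up, and the model assumes an agent whose steps run strictly one after another. It shows the CPU-side retry loop and how tool waits reduce the share of time the accelerator is actually busy.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class StepCost:
    model_ms: float  # time the accelerator spends on inference in this step
    tool_ms: float   # CPU-side time: search, API, DB, retries


def run_with_retry(tool: Callable[[], str], max_attempts: int) -> Tuple[str, int]:
    """Call a tool, retrying on RuntimeError; return (result, attempts used)."""
    last_error: Optional[RuntimeError] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return tool(), attempt
        except RuntimeError as err:
            last_error = err
    raise RuntimeError(f"tool failed after {max_attempts} attempts") from last_error


def make_flaky_tool(failures: int) -> Callable[[], str]:
    """Hypothetical tool that fails a fixed number of times, then succeeds."""
    state = {"left": failures}

    def tool() -> str:
        if state["left"] > 0:
            state["left"] -= 1
            raise RuntimeError("temporary failure")
        return "ok"

    return tool


def accelerator_busy_fraction(steps: List[StepCost]) -> float:
    """Share of wall-clock time spent on the accelerator for sequential steps."""
    model = sum(s.model_ms for s in steps)
    total = model + sum(s.tool_ms for s in steps)
    return model / total if total else 0.0


if __name__ == "__main__":
    print(run_with_retry(make_flaky_tool(2), max_attempts=3))
    # Illustrative numbers only: 200 ms of inference against 400 ms of tool waits.
    print(accelerator_busy_fraction([StepCost(100, 300), StepCost(100, 100)]))
```

With these made-up numbers the accelerator is busy only a third of the time, which is why faster CPU-side orchestration, or overlapping tool waits with other requests, matters even when the GPU itself is fast.
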
Furthermore, in the age of physical AI, this structure will extend into the real world. With robots and VLA (vision-language-action) models, AI needs to actually see, grab, walk, avoid obstacles, and correct its mistakes in the physical world, not just on a screen. Here, the CPU is responsible for body-side control: the OS, ROS, sensors, motors, I/O, safe stops, permission management, logs, and so on. Even if the VLA decides to "grab the cup," it is the CPU that is in charge of actually moving the arm safely.

The GPU also remains strong in physical AI. It will be important as an engine for generating virtual worlds in which to learn about reality, covering visual understanding, VLA training, video generation, world models, 3D simulation, synthetic data, and digital twins. Failure cases and edge cases are very important in robot training. Letting a robot fail repeatedly in the real world is dangerous and expensive, so it practices extensively in virtual space instead. For this reason, the GPU is not just a chip for chat, but a device for building worlds and practicing in them.

In conclusion, the story of GPU supremacy does not end; it enters its second chapter. GPUs will continue to be at the heart of AI. However, as AI agents and physical AI spread, the surrounding layers, such as CPU, DRAM, HBM, CXL, NIC, SSD, and the scheduler, grow larger. AI will no longer be a single chip, but a civilization-scale execution system. Within it, the GPU sits at the center, the CPU connects actions, the TPU supports routine calculations, and the LPU speeds up responses. When looking at future AI infrastructure, it is important not only to look at GPUs, but also to understand the vast division of labor among semiconductors that is expanding around them.
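
As a back-of-the-envelope companion to the KV cache discussion above, the following sketch estimates how much memory the cache needs. It assumes a standard decoder-only Transformer that stores one key and one value vector per layer, per KV head, per token. The function name and the model dimensions in the example are hypothetical and do not describe any specific model.

```python
def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    seq_len: int,
    batch_size: int,
    bytes_per_value: int = 2,  # 2 bytes assumes fp16/bf16 storage
) -> int:
    """Estimate KV cache size: keys and values for every layer, head, and token."""
    keys_and_values = 2
    return (
        keys_and_values
        * num_layers
        * num_kv_heads
        * head_dim
        * seq_len
        * batch_size
        * bytes_per_value
    )


if __name__ == "__main__":
    # Hypothetical configuration, chosen only to make the arithmetic easy.
    one_user = kv_cache_bytes(32, 8, 128, seq_len=8192, batch_size=1)
    sixteen_users = kv_cache_bytes(32, 8, 128, seq_len=8192, batch_size=16)
    print(one_user / 2**30, "GiB for one sequence")
    print(sixteen_users / 2**30, "GiB for sixteen sequences")
```

The estimate scales linearly with both context length and the number of concurrent sequences, which is why long contexts, many users, and agent loops push the cache out of HBM and toward the wider memory hierarchy.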

Recent news

MORE>>-

BobbV

2026-05-08 07:20

BobbV

2026-05-08 07:20

-

oriol andre 99

2026-05-08 07:20

oriol andre 99

2026-05-08 07:20

-

HD Ultra rare coins

2026-05-08 07:20

HD Ultra rare coins

2026-05-08 07:20

-

Old world coins vault

2026-05-08 07:20

Old world coins vault

2026-05-08 07:20

-

SMART CRIPTOATIVOS

2026-05-08 07:20

SMART CRIPTOATIVOS

2026-05-08 07:20

-

코인신사

2026-05-08 07:00

코인신사

2026-05-08 07:00

-

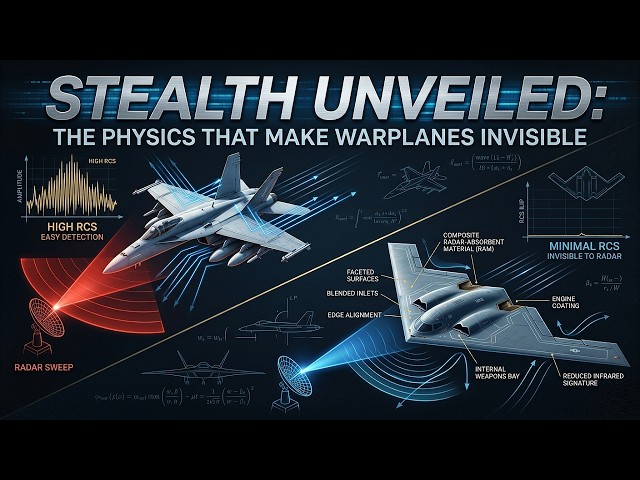

DARK AXIS MILITARY

2026-05-08 06:57

DARK AXIS MILITARY

2026-05-08 06:57

-

Economic Policy Institute

2026-05-08 06:38

Economic Policy Institute

2026-05-08 06:38

-

Coin Servisi

2026-05-08 06:38

Coin Servisi

2026-05-08 06:38


