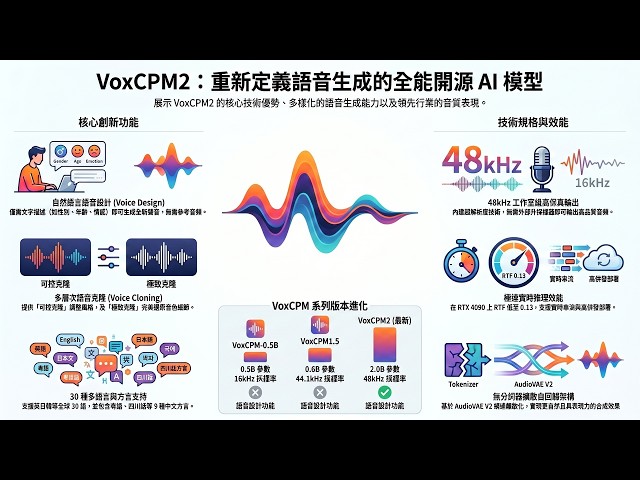

🎯 AI can now dub Hokkien too! 2B parameters beat 7B! A full breakdown of VoxCPM2: thirty languages + nine dialects, with a smaller model producing more realistic voices.

Release: 2026/05/11 04:51

Original author: AI 論文白話文

Original source: https://www.youtube.com/embed/afnxVzN_ZF4

🎤 This open-source speech model is a little different: what has the technical community talking is not its scale but its architecture.

VoxCPM2 (OpenBMB team):

- 🧠 Core breakthrough: diffusion-autoregressive architecture → no discrete tokens at all
- 🔊 Native 48kHz high-fidelity audio (no external upsampler required)
- 🌍 30 global languages + 9 Chinese dialects (including Hokkien!)
- 🎭 Voice design: a pure text description → a new voice created from scratch
- 🎯 Dual-track cloning: controllable cloning (flexible) + ultimate cloning (fidelity)
- 📊 Seed-TTS benchmark: 2B parameters beat the 7B Qwen2.5-Omni
- ⚡ RTF 0.13 (Nano-vLLM acceleration) → about 7 times faster than real-time speech
- 💾 Runs on a minimum of 8GB VRAM
- 🆓 Apache 2.0: fully open source and available for commercial use
- 💻 GitHub: https://github.com/OpenBMB/VoxCPM
- 🤗 HuggingFace: https://huggingface.co/openbmb/VoxCPM2
- 📄 Official documentation: https://voxcpm.readthedocs.io/en/latest/models/voxcpm2.html

[Chapter 1: Core architecture breakthrough]

- 0:00 - VoxCPM2 (OpenBMB): 2 billion parameters, trained on more than 2 million hours of speech, Apache 2.0, fully open source and usable commercially
- 0:18 - Diffusion-autoregressive architecture: drops discrete tokens entirely and uses a continuous speech representation to generate native 48kHz high-fidelity audio directly, with no external upsampler

[Chapter 2: 30 languages + 9 dialects]

- 0:55 - Native support for 30 global languages, with zero-shot output (no language tag required)
- 1:31 - In-depth optimization for 9 Chinese dialects, including Hokkien (Minnan), which open-source models rarely support natively

[Chapter 3: Voice design (creating voices from pure text)]

- 1:56 - A pure text description (such as gender, emotion, or speaking rate) creates a new voice from scratch, without any reference audio
- 2:19 - With strong context awareness, a text description works like a dedicated mixing console

[Chapter 4: Dual-track voice cloning]

- 2:53 - Controllable cloning (16kHz audio + text): emotion and speaking rate can be changed freely; suited to virtual anchors
- 3:22 - Ultimate cloning (audio + verbatim transcript): continues from the reference audio and faithfully preserves every breath and pause; suited to voice restoration

[Chapter 5: The natural feel of going tokenizer-free]

- 3:53 - Avoids the loss of acoustic detail caused by the forced segmentation of traditional tokens
- 4:18 - Operates directly in a continuous latent space, so the waveform is smooth and natural and retains the subtle rises and falls of human emotion

[Chapter 6: AudioVAE V2 native upsampling]

- 4:46 - Asymmetric codec design that performs 16kHz to 48kHz super-resolution inside the model
- 5:12 - Drops the external upsampler, reducing maintenance cost and points of failure in enterprise deployments

[Chapter 7: End-to-end five-stage inference]

- 5:22 - Input → Understanding (MiniCPM-4) → Generation (diffusion autoregressive) → Rendering (AudioVAE V2) → Output (0.13 RTF), forming a complete acoustic pipeline

[Chapter 8: Three generations of evolution]

- 5:56 - Parameters grow from 0.5B to 2B, with coverage expanded to 30 languages and the full feature set
- 6:42 - LM token rate held at 6.25Hz to keep inference efficient

[Chapter 9: Benchmark data]

- 6:50 - Seed-TTS: the 2B model (WER 1.84 / SIM 75.3) beats a leading 7B open-source model
- 7:29 - 30-language ASR (internal test): global average error rate of only 1.68%
- 8:13 - InstructTTSEval: leads on all three instruction-following metrics, which the video takes as evidence of deep semantic understanding

[Chapter 10: Fast inference and deployment]

- 8:46 - Speed: 0.30 RTF with standard PyTorch; 0.13 RTF with Nano-vLLM acceleration
- 9:07 - Supports vLLM-Omni high-throughput deployment, exposing an OpenAI-compatible API for easy integration

[Chapter 11: Fine-tuning and open-source ecosystem]

- 9:20 - 5-10 minutes of audio is enough for SFT (deep) or LoRA (light) fine-tuning to create a custom voice
- 10:04 - Covers the server, edge, and ComfyUI ecosystems, and runs on a minimum of 8GB VRAM
- 10:43 - An open-source TTS option worth testing in 2026; trying the official demo against your own scenarios is highly recommended

━━━ 📊 Core data (strictly based on SRT content) ━━━

- Developer: OpenBMB team
- License: Apache 2.0 (fully open source, commercially usable)
- Architecture: diffusion autoregressive → tokenizer-free
- Backbone network: MiniCPM-4
- Parameters: 2B (2 billion)
- Training data: over 2 million hours of multilingual speech
- Native sampling rate: 48kHz (studio quality, no external upsampler required)
- Languages: 30 global languages
- Dialects: 9 Chinese dialects (including Hokkien)
- VRAM requirement: minimum ~8GB
- LM token rate: 6.25Hz

Inference speed:

- Standard PyTorch: RTF 0.30
- Nano-vLLM acceleration: RTF 0.13 (about 7 times faster than real-time speech)

Four major functions:

1. Voice design: pure text description → a voice created from scratch
2. Controllable cloning: reference audio + text → adjustable emotion and speaking rate
3. Ultimate cloning: reference audio + verbatim transcript → high-fidelity continuation
4. Zero-shot multilingual: automatic language identification, no language tags required

Core modules: LocEnc → TSLM → RALM → LocDiT → AudioVAE V2 (16→48kHz)

Benchmarks (figures mentioned in the SRT):

- Seed-TTS: WER 1.84 / SIM 75.3 (2B beats the 7B Qwen2.5-Omni)
- 30-language ASR: global average error rate 1.68% (English 0.42% / Chinese 0.92% / Arabic 1.23% / Indonesian 1.36%)
- InstructTTSEval: APS 84.2 / DSD 83.2 / RP 71.4 (highest on all three)
- ⚠️ The 30-language ASR figures come from internal testing, not an independent third-party evaluation

Deployment and integration:

- Nano-vLLM / vLLM-Omni (high-throughput server)
- ONNX cross-platform / Apple Neural Engine
- ComfyUI node
- OpenAI-compatible /v1/audio/speech API
- LoRA fine-tuning (lightweight switching between multiple voices)

Three generations of evolution:

- VoxCPM-0.5B (first generation): 0.5B / Chinese and English / 16kHz / 12.5Hz
- VoxCPM-1.5 (stable): 0.8B / Chinese and English / 44.1kHz / 6.25Hz
- VoxCPM2 (latest): 2B / 30 languages + 9 dialects / 48kHz / 6.25Hz

━━━ 🎯 High-value application areas ━━━

- ✅ Virtual anchors: controllable cloning + instant switching between emotions
- ✅ Dynamic audiobooks: emotional control + multiple characters
- ✅ Historical voice restoration: faithful reproduction with ultimate cloning
- ✅ Speech continuation: seamless continuation in a specific style
- ✅ Global products: 30 languages, zero-shot
- ✅ Taiwan market: native Hokkien support (unique among open-source models, per the video)
- ✅ Enterprise custom voice: 5-10 minutes of data → LoRA fine-tuning → go live
- ⚠️ GPU required (minimum ~8GB VRAM)
- ⚠️ Voice design results may differ slightly from run to run
- ⚠️ The 30-language ASR figures are internal test data

━━━ 🎬 How to try the demo (only what the SRT mentions) ━━━

Suggestion at the end of the video: "Go and run the official Demo page and try it with your own usage scenario"

- 🔗 GitHub: https://github.com/OpenBMB/VoxCPM
- 🤗 HuggingFace: https://huggingface.co/openbmb/VoxCPM2
- Local deployment: pip install voxcpm

Demo script suggestions (strictly corresponding to SRT content):

1. Voice design: enter "deep male voice, sad, slow speaking" + text → observe the voice created from scratch
2. Controllable cloning: provide 16kHz reference audio → change emotion and speaking rate → compare with the original voice
3. Ultimate cloning: reference audio + verbatim transcript → verify the fidelity of every breath and pause
4. Zero-shot multilingual: no language tags → enter Chinese/English/Japanese/French text directly → observe automatic language identification
5. Hokkien test: enter Hokkien text → verify dialect quality

Free materials (reference audio):

- Record 5-10 seconds of clear speech yourself
- Common Voice https://commonvoice.mozilla.org/ (multilingual speech dataset)
- LibriSpeech https://www.openslr.org/12/ (English speech)

#VoxCPM2 #OpenBMB #Speech Synthesis #TTS #DiffusionAutoregressive #TokenizerFree #48kHz #Voice Design #VoiceDesign #Voice Clone #30 Language #Min Nan Dialect #Chinese Dialect #MiniCPM4 #AudioVAE #LoRA #Open Source TTS #Apache2 #VoiceCloning #ZeroShot #VoiceAI

═══ ⚠️ Disclaimer ═══

This video is an educational interpretation and technical review of an open-source project. The content is based on the official OpenBMB GitHub repository, the HuggingFace model card, and the technical documentation. It is unofficial and is not a direct translation of the original documents. Copyright in all code, model weights, benchmark data, and quoted content belongs to the OpenBMB team and the open-source community. Some benchmark results are internal data, not independent third-party evaluations. Comply with local laws and regulations when using the voice cloning function; it must not be used to defraud or impersonate others. Technical details are time-sensitive. If you have any questions, refer to the official repository for the latest information. 🔗 https://github.com/OpenBMB/VoxCPM
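The speed figures quoted above (RTF 0.30 and 0.13, LM token rate 6.25Hz and 12.5Hz) can be turned into more intuitive numbers with a little arithmetic. The sketch below assumes the usual definition of real-time factor (synthesis time divided by audio duration) and reads the token rate as LM steps per second of audio; the function names are illustrative and are not part of the voxcpm package.

```python
# Back-of-the-envelope helpers for the speed figures quoted in the video.
# Assumption: RTF = synthesis time / duration of the generated audio.

RTF_PYTORCH = 0.30      # standard PyTorch figure from the video
RTF_NANO_VLLM = 0.13    # Nano-vLLM figure from the video


def speedup_from_rtf(rtf: float) -> float:
    """How many times faster than real time a given RTF is (1 / RTF)."""
    if rtf <= 0:
        raise ValueError("RTF must be positive")
    return 1.0 / rtf


def synthesis_seconds(audio_seconds: float, rtf: float) -> float:
    """Estimated compute time needed to produce `audio_seconds` of speech."""
    return audio_seconds * rtf


def ms_per_lm_token(token_rate_hz: float) -> float:
    """Milliseconds of audio covered by one LM step at the given token rate."""
    if token_rate_hz <= 0:
        raise ValueError("token rate must be positive")
    return 1000.0 / token_rate_hz


if __name__ == "__main__":
    for name, rtf in [("PyTorch", RTF_PYTORCH), ("Nano-vLLM", RTF_NANO_VLLM)]:
        print(f"{name}: {speedup_from_rtf(rtf):.1f}x real time, "
              f"{synthesis_seconds(60, rtf):.1f}s of compute per minute of audio")
    for hz in (12.5, 6.25):
        print(f"{hz}Hz token rate: {ms_per_lm_token(hz):.0f}ms of audio per LM step")
```

Under these assumptions, RTF 0.13 works out to roughly 7.7 times real time, which matches the "about 7 times" figure in the video, and halving the token rate from 12.5Hz to 6.25Hz halves the number of LM steps needed for the same length of audio.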
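The video mentions an OpenAI-compatible /v1/audio/speech endpoint served through vLLM-Omni. A minimal client sketch using only the Python standard library might look like the following. The base URL, the `voice` value, and the exact set of fields the server accepts are assumptions here (the field names follow the general OpenAI speech request shape, and the model name is taken from the HuggingFace link above), so check the official repository before relying on them.

```python
import json
import urllib.request


def build_speech_request(
    base_url: str,
    text: str,
    model: str = "openbmb/VoxCPM2",   # from the HuggingFace URL; server-side name may differ
    voice: str = "default",           # hypothetical voice identifier
    response_format: str = "wav",
) -> urllib.request.Request:
    """Build (but do not send) a POST request for an OpenAI-style speech endpoint."""
    payload = {
        "model": model,
        "input": text,
        "voice": voice,
        "response_format": response_format,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/audio/speech",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def synthesize_to_file(base_url: str, text: str, out_path: str) -> None:
    """Send the request and write the returned audio bytes to `out_path`."""
    request = build_speech_request(base_url, text)
    with urllib.request.urlopen(request) as response:
        audio_bytes = response.read()
    with open(out_path, "wb") as f:
        f.write(audio_bytes)


# Example (needs a running server; the address is an assumption):
# synthesize_to_file("http://localhost:8000", "Hello from VoxCPM2", "hello.wav")
```

Keeping request construction separate from sending makes it possible to inspect the payload without a running server, which is convenient when comparing it against the server's documented schema.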


